An Architecture for Fast and General Data Processing on Large Clusters: A Summary and Application in Academic Writing

"An Architecture for Fast and General Data Processing on Large Clusters" is Matei Zaharia's doctoral dissertation (UC Berkeley, 2014). It develops the Resilient Distributed Dataset (RDD) model and the Spark framework, both first presented in earlier papers by Zaharia et al., and it has become a cornerstone of modern big data computing. For students and researchers, including those who arrive here through searches such as "其它文档类资源 CSDN下载 代写英语论文" (other document resources, CSDN download, English paper ghostwriting), understanding this architecture matters both as technical knowledge and as a foundation for writing high-quality academic work in computer science and data engineering.

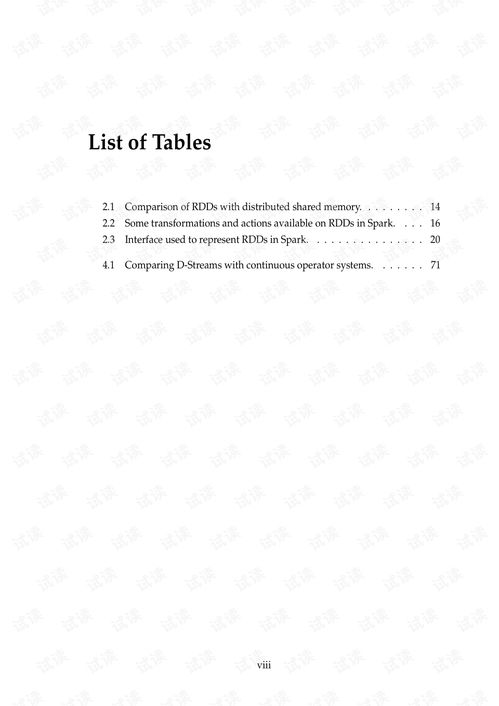

The core innovation of this architecture is the RDD, a fault-tolerant, parallel data structure that lets programs keep working data in memory across a cluster. MapReduce implementations such as Hadoop write intermediate results to disk between jobs. RDDs instead let iterative algorithms and interactive analysis reuse intermediate results held in memory. For workloads that revisit the same data, such as machine learning and graph processing, the resulting speedups can reach an order of magnitude or more. The architecture's generality comes from expressing a wide range of parallel computations with a small set of coarse-grained transformations (such as map, filter, and join), which lazily define new RDDs, and actions, which trigger computation and return a result.
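The distinction between lazy transformations, actions, and in-memory persistence can be illustrated with a small single-process sketch. This is not Spark's API: `ToyRDD` and its methods are hypothetical names chosen to mirror the vocabulary above, and the "cluster" is simply a list of partitions in one Python process.

```python
from functools import reduce as fold


class ToyRDD:
    """Hypothetical single-process stand-in for an RDD (not Spark's API)."""

    def __init__(self, compute, num_partitions):
        # compute(i) returns an iterator over the records of partition i.
        self._compute = compute
        self.num_partitions = num_partitions
        self._cache = None  # becomes a dict once persist() is called

    @classmethod
    def parallelize(cls, data, num_partitions=2):
        data = list(data)
        # Round-robin split of the input into partitions.
        parts = [data[i::num_partitions] for i in range(num_partitions)]
        return cls(lambda i: iter(parts[i]), num_partitions)

    # Transformations: describe a new dataset, compute nothing yet.
    def map(self, f):
        return ToyRDD(lambda i: (f(x) for x in self.partition(i)),
                      self.num_partitions)

    def filter(self, pred):
        return ToyRDD(lambda i: (x for x in self.partition(i) if pred(x)),
                      self.num_partitions)

    def persist(self):
        # Ask for computed partitions to be kept in memory for reuse.
        self._cache = {}
        return self

    def partition(self, i):
        if self._cache is None:
            return self._compute(i)
        if i not in self._cache:
            self._cache[i] = list(self._compute(i))
        return iter(self._cache[i])

    # Actions: force evaluation and return a value to the caller.
    def collect(self):
        return [x for i in range(self.num_partitions) for x in self.partition(i)]

    def reduce(self, f):
        return fold(f, self.collect())


calls = []  # records every time the mapped function actually runs


def square(x):
    calls.append(x)
    return x * x


squares = ToyRDD.parallelize(range(1, 7), num_partitions=2).map(square).persist()
evens = squares.filter(lambda x: x % 2 == 0)
calls_before_action = len(calls)           # 0: transformations are lazy

even_squares = evens.collect()             # [4, 16, 36]
calls_after_first_action = len(calls)      # 6: each input squared once

total = squares.reduce(lambda a, b: a + b)  # 91, served from the cache
calls_after_second_action = len(calls)     # still 6: nothing recomputed
```

The second action reuses the persisted partitions, which is the behavior that makes iterative and interactive workloads cheap in this model. A real system would also distribute partitions across machines and evict cached data under memory pressure, which this sketch does not attempt.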

For students writing English papers on distributed systems, this work makes an excellent case study. A well-structured paper could follow this outline:

- Introduction: Pose the problem of slow, disk-based processing for iterative and interactive workloads in existing frameworks like MapReduce.

- Background & Related Work: Briefly explain MapReduce and its limitations, setting the stage for the need for a new architecture.

- Core Architecture – RDDs: Detail the RDD abstraction, its properties (immutability, lineage), and how lineage enables fault recovery without replication.

- The Spark Framework: Describe how Spark implements this architecture, including its programming interface, its runtime (a driver program coordinating worker processes, called executors in Apache Spark), its scheduler, and its memory management.

- Performance Evaluation: Summarize the key experiments that show performance gains over Hadoop for iterative algorithms (e.g., logistic regression) and ad-hoc queries.

- Discussion & Conclusion: Highlight the impact of this work (the rise of Spark), its limitations (e.g., a poor fit for applications that make asynchronous, fine-grained updates to shared state), and future trends.
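When writing the core architecture section, it can help to show how lineage replaces replication. The following is a minimal sketch under simplifying assumptions: `LineageNode`, `map_partitions`, `get`, and `lose` are hypothetical names, only narrow (partition-to-partition) dependencies are modeled, and a lost partition is simulated by deleting it from an in-memory dictionary.

```python
class LineageNode:
    """Hypothetical dataset that remembers how it was derived (its lineage)."""

    def __init__(self, num_partitions, parent=None, fn=None, source=None):
        self.num_partitions = num_partitions
        self.parent = parent   # upstream dataset, or None for the input
        self.fn = fn           # function applied to one parent partition
        self.source = source   # stable input partitions (root dataset only)
        self.in_memory = {}    # partition index -> materialized records
        self.recomputed = []   # log of partitions computed, for the demo

    def map_partitions(self, fn):
        # Record the derivation instead of copying or replicating data.
        return LineageNode(self.num_partitions, parent=self, fn=fn)

    def get(self, i):
        if i in self.in_memory:
            return self.in_memory[i]
        if self.parent is None:
            data = list(self.source[i])          # reread stable storage
        else:
            data = self.fn(self.parent.get(i))   # replay lineage for partition i
        self.recomputed.append(i)
        self.in_memory[i] = data
        return data

    def lose(self, i):
        # Simulate the failure of the node holding partition i.
        self.in_memory.pop(i, None)


root = LineageNode(3, source=[[1, 2], [3, 4], [5, 6]])
doubled = root.map_partitions(lambda part: [2 * x for x in part])
shifted = doubled.map_partitions(lambda part: [x + 1 for x in part])

before = [shifted.get(i) for i in range(3)]  # materialize every partition

# Partition 1 of both derived datasets is lost; the input survives.
doubled.lose(1)
shifted.lose(1)
doubled.recomputed.clear()
shifted.recomputed.clear()

after = [shifted.get(i) for i in range(3)]
# Same result as before, and only partition 1 was rebuilt at each level.
```

Only the lost partition is recomputed, and no second copy of the data was ever stored. Wide dependencies such as joins, where one output partition depends on many input partitions, make recovery more expensive and are outside the scope of this sketch.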

Regarding the search terms provided:

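- 其它文档类资源 CSDN下载 (other document resources, CSDN download): Platforms like CSDN may host summaries, slides, or translations of this work, but the most authoritative sources are the original publications: the dissertation itself (available as a UC Berkeley EECS technical report and from ACM) and the related RDD paper in the USENIX NSDI 2012 proceedings. Always prioritize primary sources in academic writing to ensure accuracy.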

- 代写英语论文 (English paper ghostwriting): Outsourcing the writing of a paper (代写) is unethical and violates academic integrity policies at virtually all institutions. The point of studying architectures like this one is to develop your own expertise and writing skills. Use the original work and other legitimate research sources (Google Scholar, IEEE Xplore) as references to support your own analysis and synthesis.

In conclusion, the architecture for fast, general cluster processing, as realized in Apache Spark, changed how large-scale data processing is done. For students, understanding this work in depth provides rich material for technical analysis and shows how innovative systems research is carried out, which is far more valuable for an academic career than seeking shortcuts in paper writing.

Last updated: 2026-04-13 05:51:29